Composio Analysis Reveals OpenClaw Agent Security Vulnerabilities

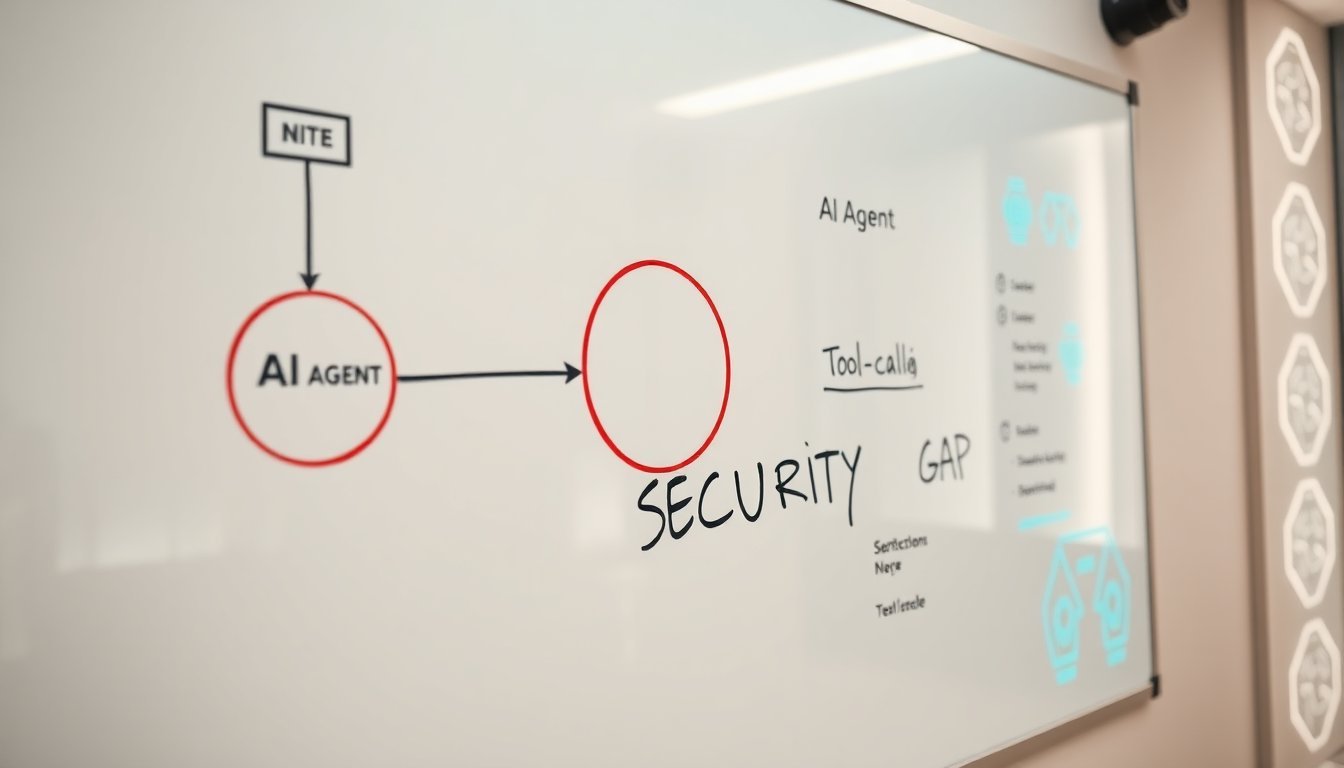

Composio's technical analysis reveals that the OpenClaw agent framework contains inherent security flaws in its tool-calling and execution architecture. These vulnerabilities represent a systemic risk for enterprises adopting agentic AI without proper security guardrails.

Composio's security team documented how OpenClaw's architecture, designed for seamless tool integration, creates exploitable attack surfaces that could allow malicious actors to hijack agent workflows, exfiltrate sensitive data, or execute unauthorized actions. This isn't a theoretical bug report—it's a structural critique of how we're building the next layer of software.

The report, sourced from Hacker News and published on March 22, 2026, dissects OpenClaw's core mechanics. The framework, which enables large language models to execute code and interact with external APIs, reportedly lacks sufficient isolation between the agent's reasoning layer and its execution environment. According to Composio, this design allows for prompt injection attacks that can bypass intended safeguards and for malicious tool outputs to poison an agent's subsequent decisions.

What Happened: A Framework Under the Microscope

Composio, a company specializing in tooling for AI agent development, conducted a security audit of the OpenClaw framework. Their findings were not about a single patchable bug but about architectural choices that prioritize flexibility over security. The analysis details how an attacker could craft inputs that cause the agent to execute unintended API calls, access resources outside its permission scope, or leak context from previous interactions.

This moves the conversation from "if" an agent can be compromised to "how easily." The vulnerabilities are baked into the connective tissue—the very tool-calling functionality that makes OpenClaw powerful. When an agent can read your email, edit a spreadsheet, and place an order with a single chain of thought, a breach in its tool-use protocol isn't a minor flaw; it's a master key.

Why This Matters: The Foundation of Agentic AI Is Cracked

The implications extend far beyond one open-source project. OpenClaw represents a class of frameworks that are becoming the standard substrate for enterprise AI agents. Banks are prototyping customer service agents built on similar stacks. Logistics firms are testing supply chain optimizers. If the foundational layer is insecure, every application built atop it inherits that risk.

This creates a direct conflict between two core promises of AI agents: autonomy and safety. To be useful, agents need broad capabilities and the ability to chain actions. To be safe, they need tight constraints and verifiable execution paths. Composio's report suggests OpenClaw, and by extension much of the current design paradigm, has overwhelmingly favored the former. The industry is building skyscrapers on sand, celebrating the height while ignoring the shaky foundation.

The People and Context: A Wake-Up Call for Builders

Composio's decision to publish this analysis is significant. As a vendor in the agent tooling space, they have a vested interest in a secure and thriving ecosystem. This public critique signals that responsible players see the current trajectory as unsustainable. It's a corrective from within the builder community, aimed at peers who may be moving too fast.

The context is a market frenzy. Venture capital is pouring into agent startups. Every major cloud provider is launching an "Agentic AI" suite. In this gold rush, security is often relegated to a future phase, a problem for "post-MVP" scaling. Composio's report is a stark reminder that for software that acts in the real world, security isn't a feature—it's the first requirement. The labs and companies pushing these frameworks have a responsibility to bake in security by design, not bolt it on as an afterthought.

What Happens Next: The Road to Trustworthy Agents

The immediate next step is a response from the OpenClaw maintainers and the broader open-source community. Will they treat this as a critical design review and initiate a major architectural refactor? Or will they downplay it as a theoretical concern? The reaction will set a precedent for how seriously the field takes security audits.

Longer term, this analysis will force a reckoning on standards. We are likely to see the emergence of security-focused forks of popular frameworks, increased demand for formal verification of agent action chains, and potentially new regulatory scrutiny. Enterprise adopters will start demanding third-party security audits for any agent framework before integration. The era of trusting agent code because it's "just a prototype" is over. Composio has effectively sounded the alarm that the prototype is now production software, with all the attendant risks.

Source and attribution

Hacker News

OpenClaw Is a Security Nightmare Dressed Up as a Daydream

Discussion

Add a comment