Why AI Agents Are The Perfect Solution For Problems We Created Ourselves

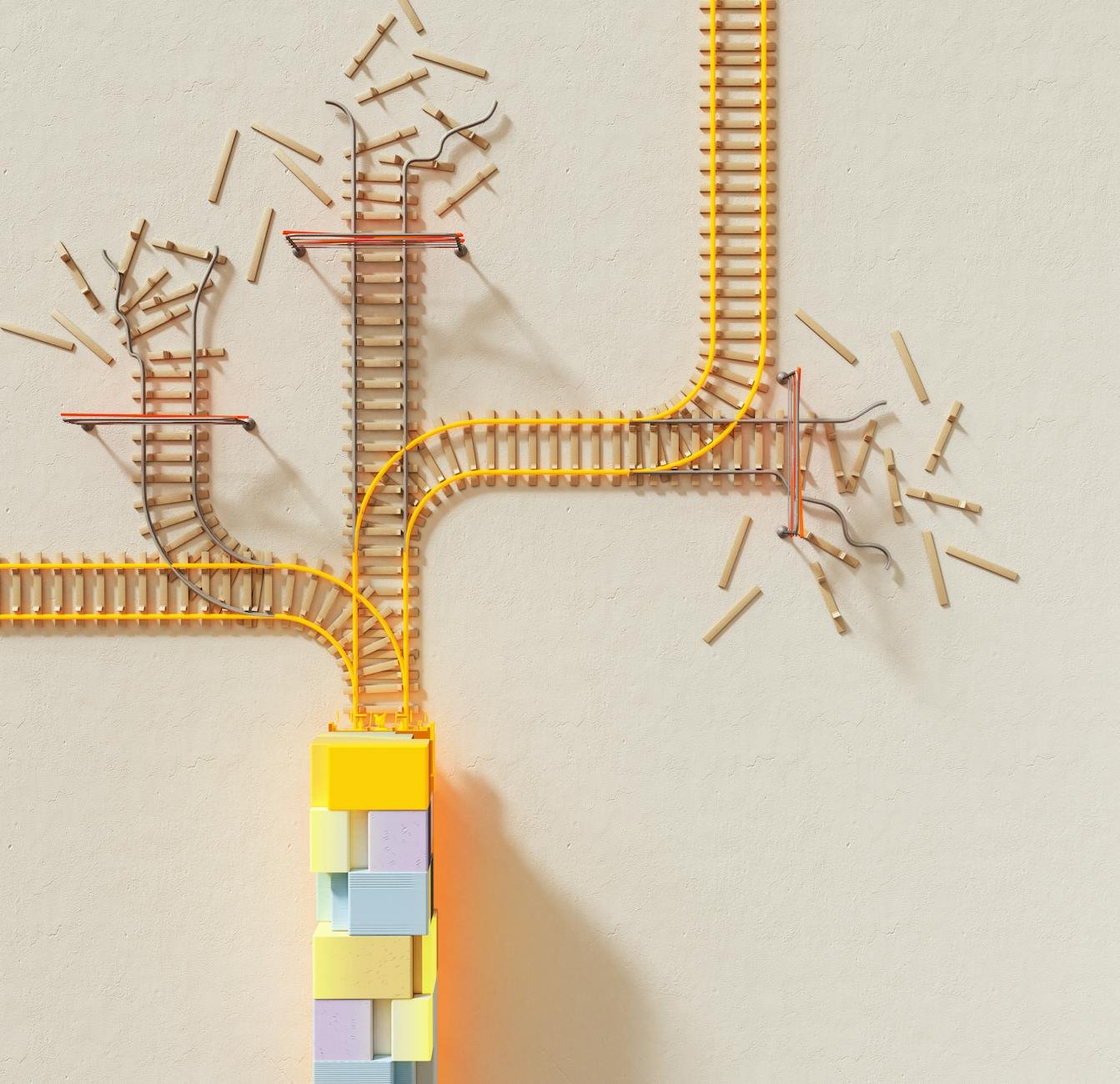

Kwaak, a project trending on GitHub, promises to use AI agents to tackle technical debt - the programming equivalent of using a flamethrower to clean your kitchen. Because what could possibly go wrong when you automate the cleanup of your automation's mess?